Monitor websites with Grafana, Prometheus and Blackbox Exporter

Aron Schüler

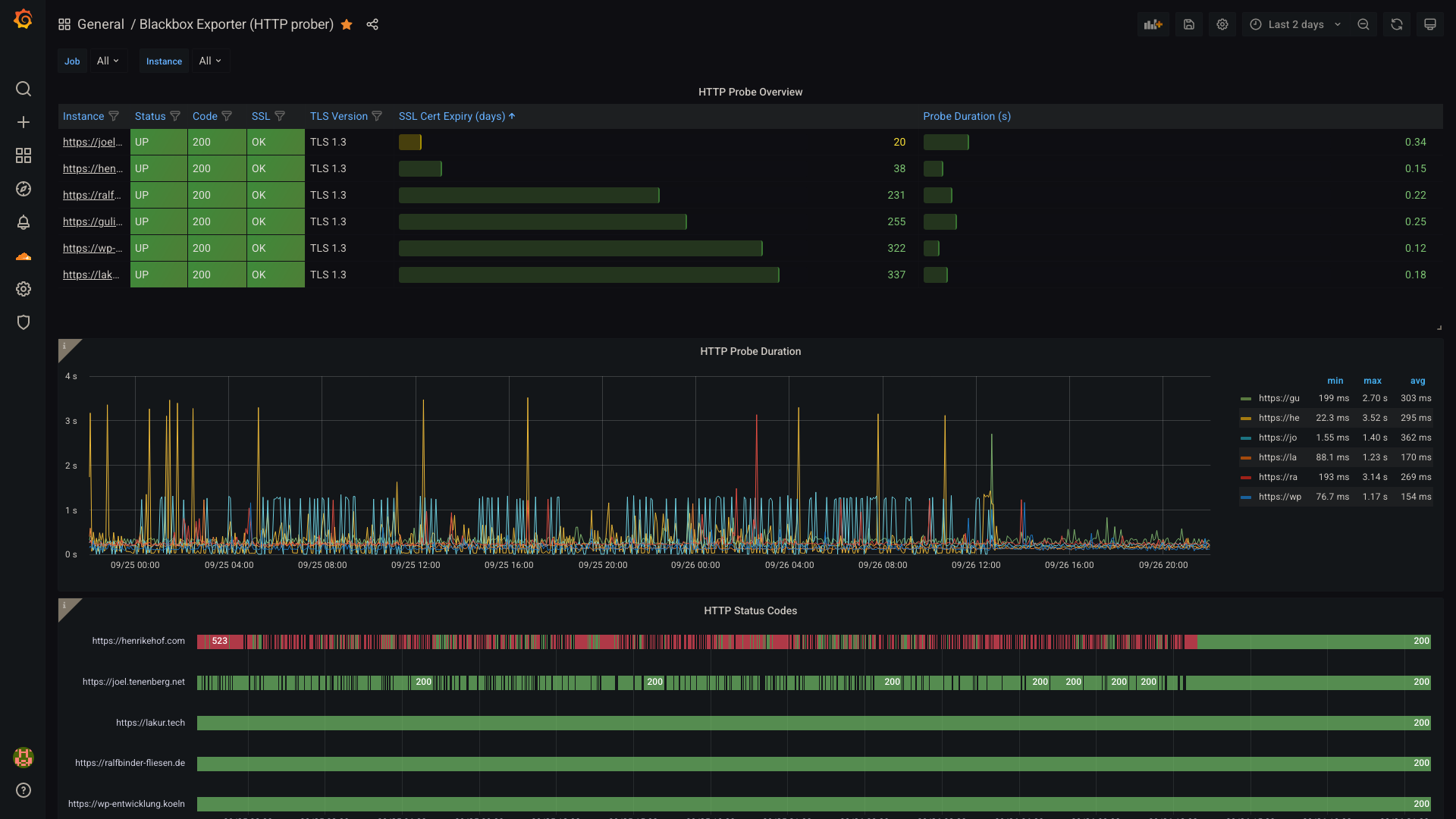

This post describes how to set up Grafana, Prometheus and the Blackbox exporter quickly and easily, allowing you to monitor your websites.

Grafana is a great tool for visualizing data, usually fed from a time series database like InfluxDB or Prometheus. I use it in combination with Prometheus to create all kinds of dashboards. One dashboard monitors the ads blocked by my Pi-hole, another monitors the water level of all the different rivers I fish in.

I also set up a dashboard for the websites I host, where I can see when their SSL certificates expire, how long each HTTP probe takes, and which HTTP status code it returned over time.

Prerequisites

As always, I am going to use Docker where I can. I just don’t see the point in installing anything directly on my hosts/servers anymore. In comparison, Docker images are more reliable, easier to upgrade and much easier to move to a new system. Don’t think so? I’d love to hear your opinion down in the comments.

With Docker, we also get three running services, with all the necessary configuration exposed, by writing one file and running one command. You cannot beat that with traditional installs, can you?

Let’s jump into the file, the `docker-compose.yml`, which sets up the three necessary services.

Configuring the Containers with Docker-Compose

```yaml
version: "3.3"

services:
  # Dashboards, published on host port 8080
  grafana:
    image: grafana/grafana:latest
    restart: unless-stopped
    volumes:
      - ./grafana/data:/var/lib/grafana
    ports:
      - "8080:3000"

  # Time series database that scrapes itself and the blackbox exporter
  prometheus:
    image: prom/prometheus:latest
    restart: unless-stopped
    volumes:
      - ./prometheus/prometheus.yml:/etc/prometheus/prometheus.yml
      - ./prometheus/data:/data:rw
    command: --config.file=/etc/prometheus/prometheus.yml --storage.tsdb.path=/data
    ports:
      - "9090:9090"

  # Probes the websites on behalf of Prometheus
  blackbox:
    image: prom/blackbox-exporter:master
    restart: unless-stopped
    volumes:
      - ./blackbox/blackbox.yml:/etc/blackbox/blackbox.yml
    command: --config.file=/etc/blackbox/blackbox.yml
    user: "1000:1000"
    ports:
      - "9115:9115"
```

Note that this configuration exposes all running services. We could also put the services into their own network and only expose Grafana, but that’s beside the point of this post.

You do have to create the folders for the service data manually, though, because they need specific ownership. I believe this could be avoided by mounting the paths and letting the containers create the folders, but I haven’t tested that yet.

So create the folders as follows:

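```shell
# chown needs root, so run these as root or prefix them with sudo
mkdir -p grafana/data
# The Grafana image runs as UID 472 (group root)
chown 472:root grafana/data
mkdir -p prometheus/data
# The Prometheus image runs as nobody, which is UID/GID 65534 inside the container
chown nobody:nogroup prometheus/data # or 65534:65534 if your host's nobody has another UID
mkdir blackbox
```

Configuring the services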

Configuring Grafana

Well… actually, Grafana doesn’t need any configuration. Later we will add the dashboard I am using, but not before all other services are configured and running.

If you’re keen, you can configure Grafana to allow anonymous logins and give anonymous users admin privileges. Only do this if you run it in your own network and are aware that it creates a huge attack vector: anyone who can reach Grafana gets admin rights, and from there someone willing to invest the time could even attempt a container escape.
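If you do go down that route, Grafana reads every setting from environment variables named `GF_<SECTION>_<KEY>`, so the options from the `[auth.anonymous]` section can be set without editing `grafana.ini`. A minimal sketch, assuming you keep them in a file called `grafana.env` (a name picked for this example) next to `docker-compose.yml`:

```shell
#!/bin/sh
# Write an env file for the grafana container. The file name grafana.env is
# only an example; each variable maps to a key in the [auth.anonymous] section.
cat > grafana.env <<'EOF'
# Let visitors in without logging in ...
GF_AUTH_ANONYMOUS_ENABLED=true
# ... and give them the Admin role. Only do this on a network you trust!
GF_AUTH_ANONYMOUS_ORG_ROLE=Admin
EOF
```

Then add `env_file: grafana.env` to the `grafana` service in `docker-compose.yml` and recreate the container with `docker-compose up -d`.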

Configuring Prometheus

Prometheus collects data and stores it in its TSDB, a time series database. For it to work properly, we need to tell it what to scrape and how often. This is done by creating `prometheus/prometheus.yml` with the following content:

```yaml
global:
  scrape_interval: 15s # By default, scrape targets every 15 seconds.
  evaluation_interval: 15s # Evaluate rules every 15 seconds.
  # scrape_timeout is set to the global default (10s).

scrape_configs:
  - job_name: "prometheus"
    # Override the global default and scrape targets from this job every 5 seconds.
    scrape_interval: 5s
    static_configs:
      - targets: ["localhost:9090"]

  - job_name: "httpd"
    scrape_interval: 60s
    metrics_path: /probe
    params:
      module: [http_2xx]
    static_configs:
      - targets:
          - https://lakur.tech
          - https://example.com
    relabel_configs:
      # Pass the website URL to the exporter as the "target" parameter
      - source_labels: [__address__]
        target_label: __param_target
      # Keep the website URL as the instance label
      - source_labels: [__param_target]
        target_label: instance
      # Send the actual scrape to the blackbox exporter
      - target_label: __address__
        replacement: blackbox:9115
```

This configures Prometheus to scrape its own metrics every 5 seconds. The two targets of the `httpd` job, `https://lakur.tech` and `https://example.com`, are scraped via the blackbox exporter. The exporter does not probe anything on its own. Instead, every 60 seconds Prometheus asks it to probe each given target and return the results.

Replace these two targets with the websites you want to monitor.
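To make the relabeling less abstract: for every entry under `targets`, the rules copy the URL into the `target` query parameter, keep it as the `instance` label, and point the actual scrape at the exporter. The hypothetical `probe_url` helper below just prints the resulting URL. It assumes the `blackbox:9115` replacement and the `http_2xx` module from the config above:

```shell
#!/bin/sh
# Hypothetical helper: print the URL Prometheus scrapes for one target once
# the relabel_configs of the "httpd" job have been applied.
#   $1 - the monitored website (ends up in the "target" parameter)
#   $2 - blackbox module, defaults to http_2xx as in params.module
probe_url() {
  target="$1"
  module="${2:-http_2xx}"
  # __address__ becomes blackbox:9115 and metrics_path is /probe.
  # Prometheus also URL-encodes the target value; left out here for readability.
  echo "http://blackbox:9115/probe?module=${module}&target=${target}"
}

for t in https://lakur.tech https://example.com; do
  probe_url "$t"
done
```

Because port 9115 is published, you can trigger the same probe by hand from the Docker host once the containers are running: `curl "http://localhost:9115/probe?module=http_2xx&target=https://example.com"` returns metrics such as `probe_success` and `probe_http_status_code`.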

Configuring Blackbox Exporter

As the blackbox exporter does not define its own targets, we instead configure how it probes the targets it receives through Prometheus requests. Since we only want basic HTTP statistics, we don’t need to dive into other probers like TCP or ICMP; one basic HTTP prober is enough. Put the following into `blackbox/blackbox.yml`:

```yaml
modules:
  # Module name requested by prometheus.yml via params.module
  http_2xx:
    prober: http
    timeout: 5s
    http:
      method: GET
      preferred_ip_protocol: "ip4" # defaults to "ip6"
```

I discovered that blackbox uses IPv6 by default when one of the configured targets was unreachable, because that network had not been set up for IPv6. We set this to IPv4 for legacy reasons. If all your targets are reachable over IPv6, you can keep the IPv6 default.

Starting the containers

After you have created both configuration files and all three directories, start your Docker containers with `docker-compose up -d`.

If you’re not running them on localhost, you need to configure your firewall so that you can reach port 8080 of the host from your web browser. Need help? Comment below.
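With the containers running, you can already query the blackbox exporter directly, before Grafana is involved. Its `probe_ssl_earliest_cert_expiry` metric holds the expiry time of the earliest-expiring certificate as a Unix timestamp. The hypothetical `days_until_expiry` function below turns that into whole days. It is a sketch that expects exporter output on stdin and takes the current time from `date +%s` unless `NOW` is set:

```shell
#!/bin/sh
# Hypothetical helper: read blackbox exporter output on stdin and print the
# number of whole days until probe_ssl_earliest_cert_expiry (a Unix timestamp).
# Set NOW to a Unix timestamp to override the current time (handy for testing).
days_until_expiry() {
  now="${NOW:-$(date +%s)}"
  # Only the metric line itself matches; "# HELP"/"# TYPE" lines start with "#".
  # awk also understands values printed in exponent form, e.g. 1.7e+09.
  awk -v now="$now" '$1 == "probe_ssl_earliest_cert_expiry" { printf "%d\n", ($2 - now) / 86400 }'
}
```

For example, `curl -s "http://localhost:9115/probe?module=http_2xx&target=https://example.com" | days_until_expiry` prints the days left for that site’s certificate.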

Setting up Grafana

Once you can reach Grafana, you will be greeted with a login screen. The default credentials are admin:admin. Change the password in the next view and proceed to the Home view of your Grafana instance.

Now we need to set up our first data source, Prometheus.

Add Prometheus as a data source

Select Configuration > Data sources in the left menu.

Click Add data source and choose Prometheus on the next screen. If you plan to use my dashboard, you must give the data source the same name as mine, Local Prometheus, for the import to work flawlessly.

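You also have to set the URL under HTTP settings. For a Prometheus instance running on the same server, this should be http://localhost:9090. Normally we could enter http://prometheus:9090, since that is the container’s name, but somehow the resolver seemed to ignore the running service and Grafana reported 502: Bad Gateway errors.

You can still try http://prometheus:9090 and click Save & Test. If it doesn’t work, the error appears above that button. Keep in mind that with the default server (proxy) access mode, Grafana’s backend sends the requests from inside its own container, where localhost is the Grafana container itself. If localhost:9090 fails as well, the Docker host’s IP address with port 9090 is worth a try.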

Ideally, you should see the message “Data source is working” above the button.
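If you would rather not click through these screens on every new install, Grafana can also create the data source at startup from a provisioning file. A sketch, assuming you additionally mount `./grafana/provisioning` into the `grafana` service. The file name `prometheus.yml` is arbitrary, and the URL should be whichever one passed Save & Test for you:

```shell
#!/bin/sh
# Create a data source provisioning file that Grafana reads from
# /etc/grafana/provisioning/datasources/ when it starts.
mkdir -p grafana/provisioning/datasources
cat > grafana/provisioning/datasources/prometheus.yml <<'EOF'
apiVersion: 1
datasources:
  # Same name the dashboard JSON expects, so the import works unchanged
  - name: Local Prometheus
    type: prometheus
    # Grafana's backend talks to Prometheus (server-side access)
    access: proxy
    # Replace with the URL that worked for you in Save & Test
    url: http://prometheus:9090
    isDefault: true
EOF
```

The matching volume entry for the `grafana` service would be `- ./grafana/provisioning:/etc/grafana/provisioning`. Restart Grafana afterwards so it picks up the file.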

Now we are good to proceed to import our dashboard!

Add the dashboard

Select the + button in the left menu and choose Import.

Now you will be prompted to either upload your own JSON, import from grafana.com or paste some JSON into the textbox. The dashboard shown in this post’s first image is a modified version of https://grafana.com/grafana/dashboards/13659, which you can import via the link or the ID 13659.

If you want my modified version, including the awesome HTTP Status Code timeline at the bottom of the screenshot, import my JSON from https://lakur.tech/p/blackboxexporterdashboard.json. Be aware that you might need to change each occurrence of “Local Prometheus” if you named your data source differently.
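Renaming the data source in the JSON doesn’t require an editor; `sed` can do it. A minimal sketch, assuming you downloaded the dashboard as `blackboxexporterdashboard.json`. The helper name `rename_datasource` and the placeholder name `My Prometheus` are only for illustration:

```shell
#!/bin/sh
# Hypothetical helper: replace every occurrence of "Local Prometheus" in a
# dashboard JSON file with another data source name, writing a new file.
#   $1 - input JSON, $2 - output JSON, $3 - your data source name
rename_datasource() {
  # Escape characters that are special in a sed replacement (\, & and the | delimiter).
  new=$(printf '%s' "$3" | sed 's/[\\&|]/\\&/g')
  sed "s|Local Prometheus|${new}|g" "$1" > "$2"
}

# Example: rename_datasource blackboxexporterdashboard.json dashboard.json "My Prometheus"
```

Then import `dashboard.json` instead of the original file.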

Done!

You should be good to go now and have a beautiful dashboard monitoring all the websites you host.